Facebook is rolling out a new tool called Test and Learn for Facebook advertisers.

In a very general sense, Test and Learn allows you to run tests of your advertising to find what’s working for your business.

Let’s take a closer look at what this is and how you might use it.

Test and Learn: Overview

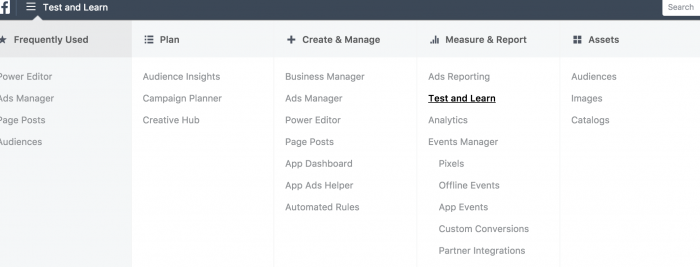

You’ll find Test and Learn under Measure & Report in your advertising menu.

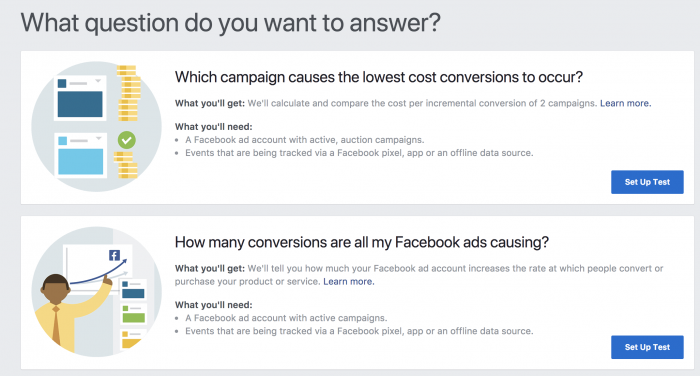

To guide you, Facebook asks “What question do you want to answer?” Right now, there are only two such questions…

One can assume more questions will be added as this tool develops. It also appears that you can request specific tests that aren’t listed from your ad rep (if you have one).

Essentially, you are able to create two types of tests:

- Campaign Comparison Test

- Account Test

Campaign Comparison Tests allow you to compare two campaigns (obviously) to determine which one produces conversions at the lowest cost.

Account Tests help uncover how much your ad accounts lift sales for your business.

Both are actually much more complicated than that, but this is the short definition. Let’s take on each test separately.

Campaign Comparison Test: Overview

This test will allow you to uncover which of two similar campaigns resulted in more conversions at a lower cost.

I know what you’re thinking because I was, too: “But can’t you just see that in the ad reports by comparing Cost Per Conversion?” This is actually different.

The difference: This test utilizes Facebook’s Conversion Lift measurement. This is how it works…

1. Test Group 1: Random sampling of people who saw ads from the first campaign during the test period.

2. Test Group 2: Random sampling of people who saw ads from the second campaign during the test period.

3. Control Group 1: Random sampling of people in the target audience who were held back from seeing ads in the first campaign during the test.

4. Control Group 2: Random sampling of people in the target audience who were held back from seeing ads in the second campaign during the test.

Facebook then measures how each test group performed against its respective control group.

Keep in mind that, unlike the results you view in Ads Manager, this test does not rely on an attribution window. In Ads Manager, by default, a conversion is reported when someone views an ad and converts within a day or clicks an ad and converts within 7 days.

That’s not involved here. In this case, Facebook only cares who saw the ad, who didn’t, and how many conversions resulted from the people who saw or didn’t see the ad. That’s the conversion lift.

The truth is that some of the people in your target audience may have converted anyway — especially if they are part of a warm audience. Or maybe someone saw an ad and converted more than a day later. Ad reports don’t account for these scenarios. This test will.
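To make the idea concrete, here is a minimal sketch of how lift could be computed from one test group and its control group. The function name and the sample numbers are hypothetical, and Facebook’s actual methodology is more involved than this; treat it as an illustration of the concept only.

```python
def conversion_lift(test_conversions, test_size, control_conversions, control_size):
    """Return (incremental_conversions, lift) for one test/control pair.

    Hypothetical helper: a simplified illustration, not Facebook's actual method.
    """
    if test_size <= 0 or control_size <= 0:
        raise ValueError("group sizes must be positive")
    # Conversion rate among people who were held back from seeing ads
    control_rate = control_conversions / control_size
    # Conversions the test group would likely have produced with no ads
    baseline = control_rate * test_size
    # Conversions that can be credited to the ads themselves
    incremental = test_conversions - baseline
    lift = incremental / baseline if baseline > 0 else float("inf")
    return incremental, lift


# Made-up numbers, for illustration only
incremental, lift = conversion_lift(
    test_conversions=1200, test_size=100_000,
    control_conversions=1000, control_size=100_000,
)
print(f"{incremental:.0f} incremental conversions, {lift:.1%} lift")
```

Notice that no attribution window appears anywhere in the calculation. Only group membership and conversion counts matter.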

Read this for more on Facebook’s testing methodology for the Campaign Comparison Test.

Campaign Comparison Test: Set Up

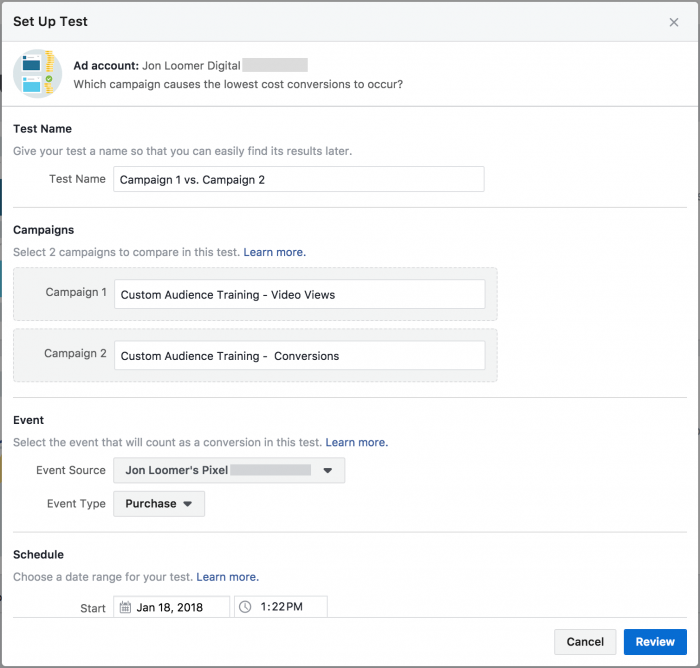

Now that you understand what this is, let’s set up a Campaign Comparison Test.

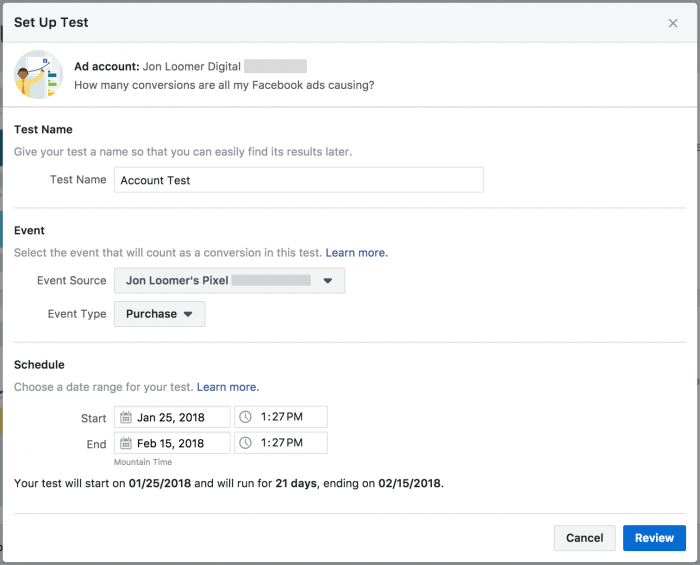

You’ll need to provide the following…

1. Two campaigns. Ideally, they’ll be very similar, minus one major difference (objective, optimization, bidding, placement, creative).

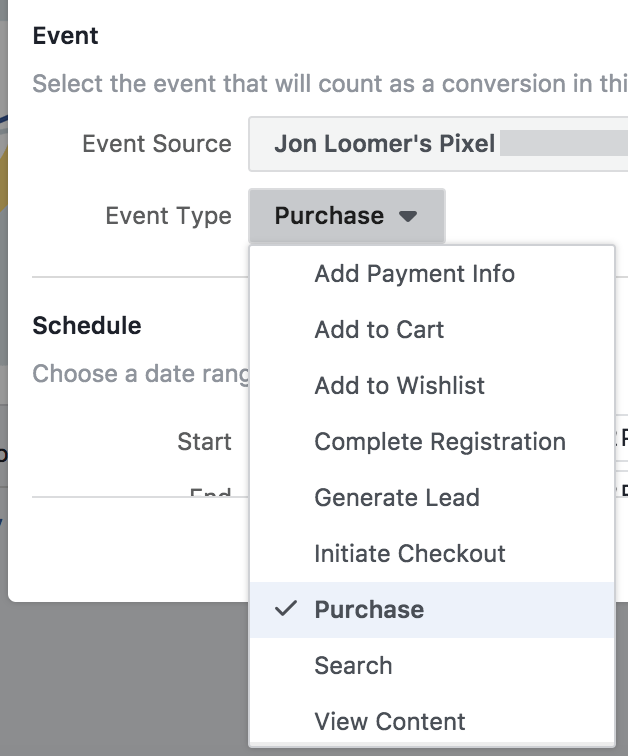

2. Event source. This is the type of conversion event that you want to track.

3. Schedule. This is the date range of your test. Facebook recommends that the test run for a month or more, and no less than two weeks. The test needs enough time to collect results that can answer your question.
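If you plan tests in a spreadsheet or script before entering them, a quick check of the date range against the schedule guidance above might look like this. It is only a sketch: the function name is hypothetical, and treating “a month” as 30 days is my own assumption.

```python
from datetime import date

MIN_DAYS = 14          # "no less than two weeks"
RECOMMENDED_DAYS = 30  # "a month or more", approximated as 30 days (assumption)


def check_schedule(start, end):
    """Classify a planned test window by the number of days between start and end."""
    days = (end - start).days
    if days < MIN_DAYS:
        return "too short"
    if days < RECOMMENDED_DAYS:
        return "acceptable"
    return "recommended"


# Hypothetical dates, for illustration only
print(check_schedule(date(2018, 6, 1), date(2018, 6, 21)))
```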

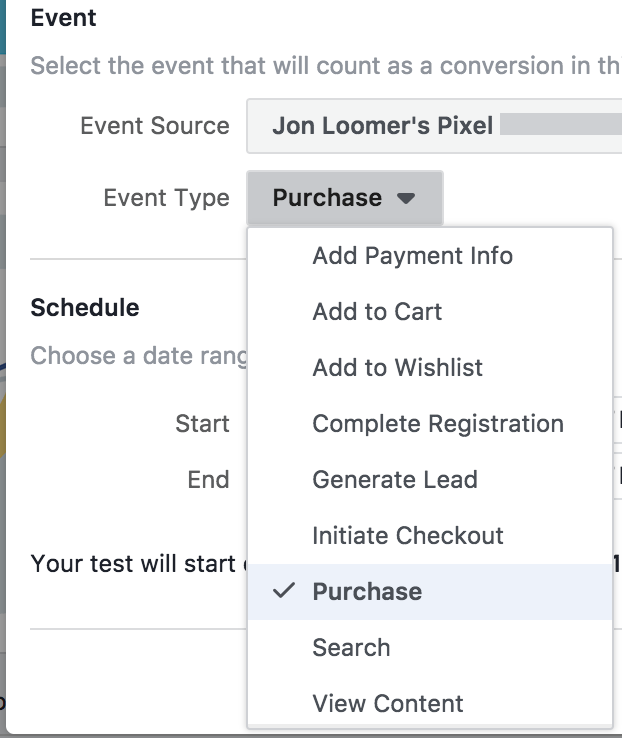

As you can see below, the event source can be a standard event from your Facebook pixel…

If you select a standard event such as a purchase, the campaigns will be compared based on total purchases of any products on your website (not just the products that were promoted). In this case, you’ll obviously need the Facebook pixel installed on your website with pixel events.

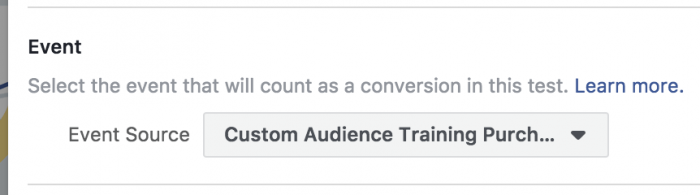

But you can also select an app event, offline event, or custom conversion.

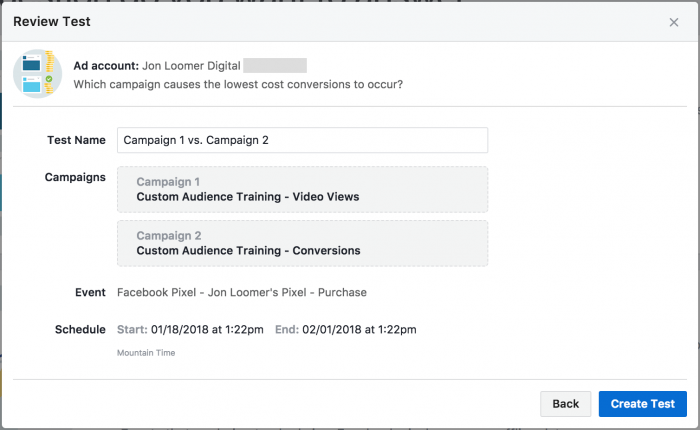

Click “Review” to take a final look…

If you’re ready to start your test, click “Create Test.”

Campaign Comparison Test: Review Results

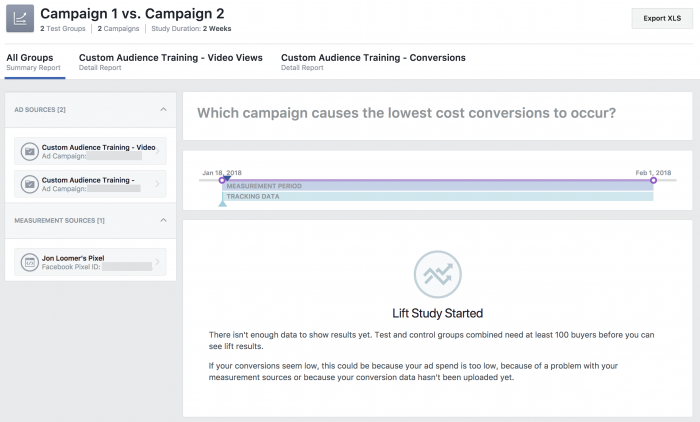

The “Learn” tab will include a list of your tests with columns for the test names, test questions, status (active, planned, completed), and test schedule.

If you click the name of the Campaign Comparison Test, it will bring up a page for results — if you have any.

In the example above, my test had just begun, so I don’t have results. But when I do, I’ll be able to click on the individual campaigns to view them separately as well as view the comparison in this main view.

I’ve only just begun testing this tool, so I don’t yet have results to “Learn” from. However, Facebook does provide some examples in their documentation.

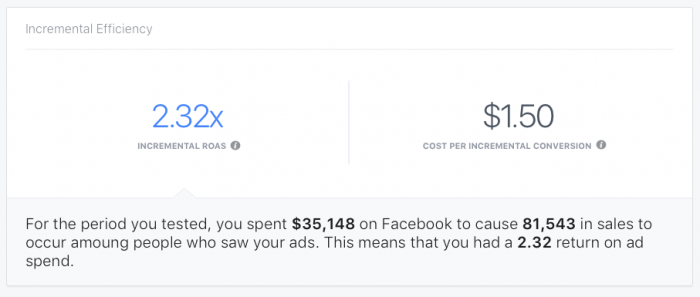

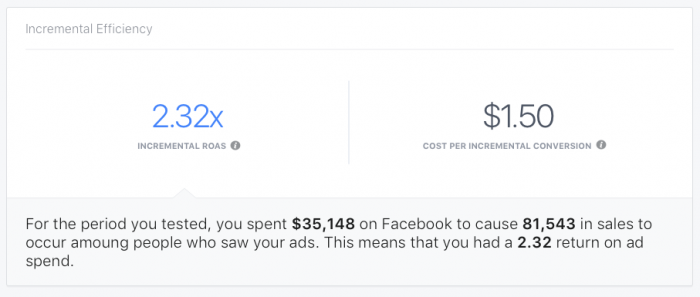

There is an Incremental Efficiency section…

This comparison shows the cost per incremental conversion of each campaign, calculated by dividing the total amount spent during the test by the number of incremental conversions for each campaign.
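As a rough sketch, comparing two campaigns on cost per incremental conversion (amount spent divided by incremental conversions) could look like the following. The campaign names and figures are made up for illustration, and the helper is hypothetical rather than anything Facebook provides.

```python
def cost_per_incremental_conversion(spend, incremental_conversions):
    """Amount spent divided by incremental conversions (hypothetical helper)."""
    if incremental_conversions <= 0:
        return None  # no measurable lift, so the cost is undefined
    return spend / incremental_conversions


# Made-up figures: (amount spent, incremental conversions)
campaigns = {
    "Campaign A": (5000.0, 250),
    "Campaign B": (5000.0, 400),
}

costs = {
    name: cost_per_incremental_conversion(spend, conversions)
    for name, (spend, conversions) in campaigns.items()
}

# The more efficient campaign is the one with the lowest cost
winner = min(
    (name for name, cost in costs.items() if cost is not None),
    key=costs.get,
    default=None,
)

for name, cost in costs.items():
    print(name, "n/a" if cost is None else f"${cost:.2f}")
print("Most efficient:", winner)
```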

There is also an Incremental Efficiency section for each campaign. It shows the Return on Ad Spend (ROAS) and cost per incremental conversion.

The math is simple in the above example: Jasper’s Market brought in $81,543 for a given campaign test group after spending $35,148, a 2.32 ROAS.
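The Jasper’s Market arithmetic can be checked in a couple of lines. The variable names are mine, and I’m assuming ROAS here is simply sales divided by spend.

```python
# Figures from Facebook's Jasper's Market example
sales = 81_543  # dollars brought in
spend = 35_148  # dollars spent

# Assumption: ROAS is sales divided by spend
roas = sales / spend
print(f"ROAS: {roas:.2f}")
```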

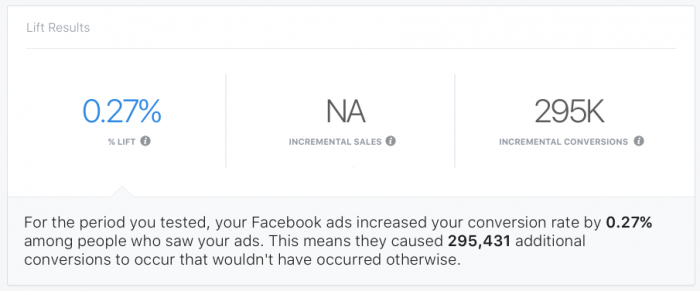

The Lift Results section for individual campaigns shows sales lift, incremental sales, and incremental conversions driven by the campaign.

In this example, some conversions would have happened with or without this particular campaign. However, the campaign resulted in 295,000 more conversions, a lift of 0.27%.

When your test is complete, you will also be able to export results.

Ad Account Test: Overview

This test works much like the Campaign Comparison Test, except that we’re looking at the impact of the entire ad account. The test runs as follows…

1. Test Group 1: Random sampling of people who saw ads from your ad account during the test.

2. Control Group 1: Random sampling of people in the target audience who were held back from seeing your ads during the test.

Facebook then compares the number of conversions that result from both groups to calculate the conversion lift.

If you want to get into the technical weeds, you can read this documentation for more details on Facebook’s testing methodology.

Ad Account Test: Set Up

The Ad Account Test is set up mostly the same way as the Campaign Comparison Test with one obvious difference: You don’t provide two campaigns to compare.

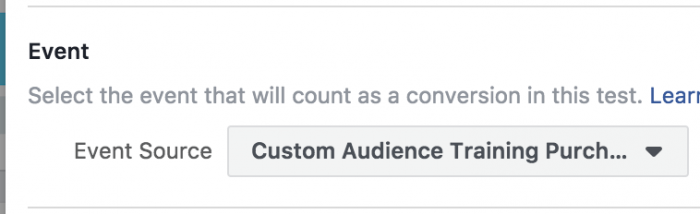

You still need to select a conversion to test against. That could be a standard event…

And that could be an offline event, app event, or custom conversion.

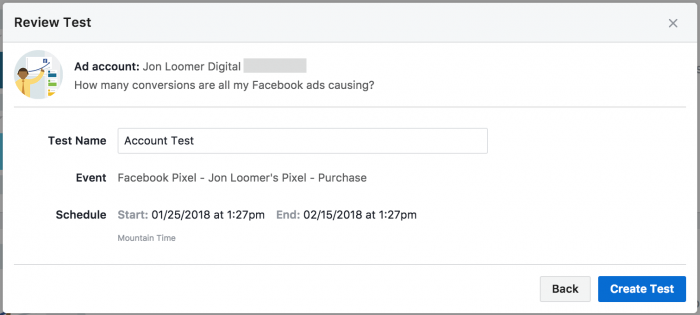

Click to review…

If you’re good to go, click “Create Test.”

Ad Account Test: Review Results

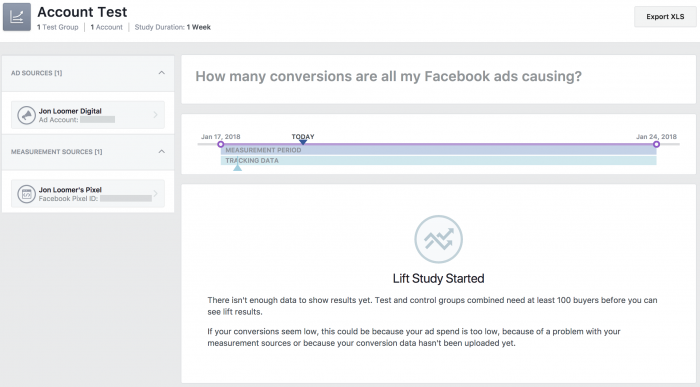

If you click on the Ad Account Test link, it will bring up a results page that looks similar to the report for the Campaign Comparison Test…

Once again, I don’t have any results yet, so let’s go to Facebook’s examples.

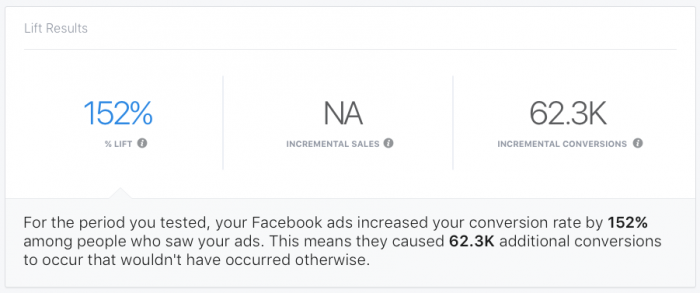

Lift Results will show the percentage of conversion rate lift, incremental sales, and incremental conversions.

In the example above, ads increased a company’s conversion rate by 152%, causing 62.3K conversions that wouldn’t have happened without the ads.
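To get a feel for what a 152% lift means, you can back out the implied baseline. This sketch assumes lift is defined as incremental conversions divided by the conversions that would have happened without ads. That definition is my assumption, and so are the function name and the rounded inputs.

```python
def baseline_from_lift(incremental_conversions, lift):
    """Estimate conversions that would have happened without ads.

    Assumes lift = incremental conversions / baseline conversions.
    """
    if lift <= 0:
        raise ValueError("lift must be positive")
    return incremental_conversions / lift


incremental = 62_300  # "62.3K" from the example
lift = 1.52           # 152% expressed as a ratio

baseline = baseline_from_lift(incremental, lift)
total_with_ads = baseline + incremental

print(f"Estimated conversions without ads: {baseline:,.0f}")
print(f"Estimated conversions with ads: {total_with_ads:,.0f}")
```

Under that assumption, roughly 41,000 conversions would have happened anyway, and the ads brought the total to a bit over 103,000.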

The Incremental Efficiency section shows incremental Return on Ad Spend and cost per incremental conversion.

In the above example, the brand spent $35,148 to get $81,543 in sales, a 2.32 ROAS (I know, it’s the same example as was provided for the Campaign Comparison Test — I can only share what I’m given).

What to do with Results?

When running the Campaign Comparison Test, you should be comparing two very similar campaigns that are different in one specific way (creative, objective, optimization, placement, etc.).

As mentioned earlier, the Cost Per Conversion in your ad reports doesn’t tell the whole story. If one campaign is resulting in more conversion lift than another, it could provide valuable insight into creative, objective, optimization, or placement — not just for these campaigns, but for others.

The Ad Account Test can help you understand the overall health of your advertising. Sure, your ads are currently leading to sales. But would those sales have happened without your ads? What’s the overall lift? If you determine that your lift or ROAS isn’t acceptable, you should test individual campaigns against one another to help improve your results.

Your Turn

Do you have Test and Learn yet? Have you created any tests? I’d love to hear about your results.

Let me know in the comments below!